Hi there,

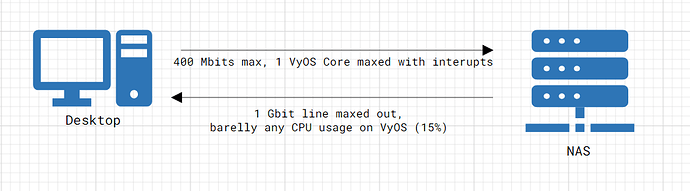

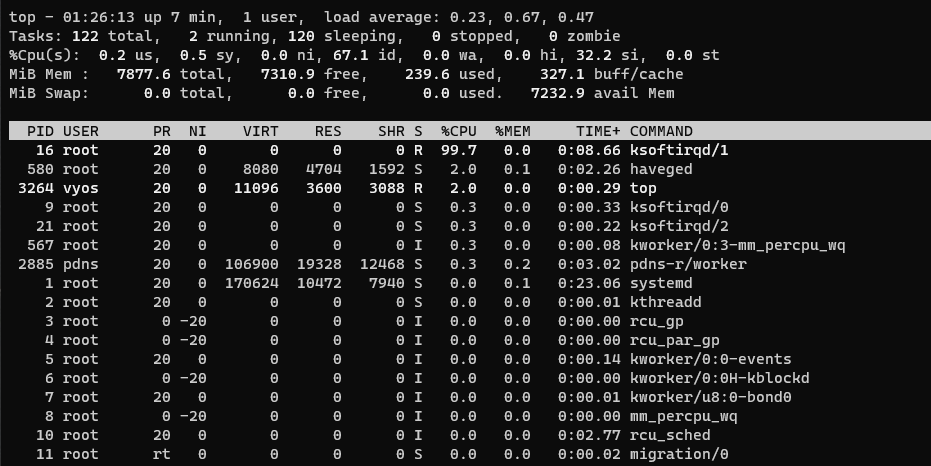

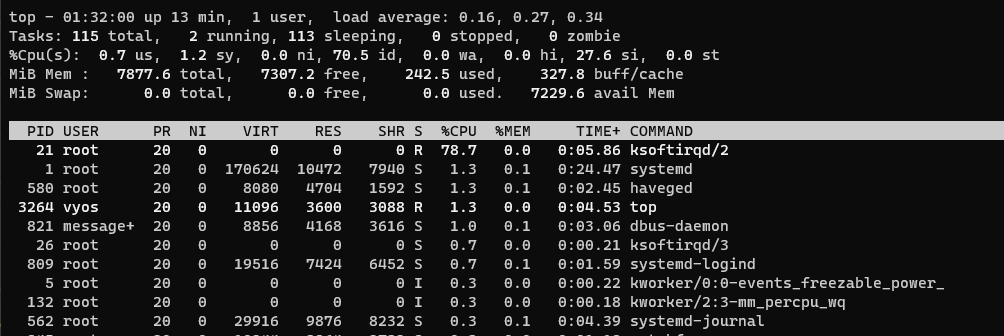

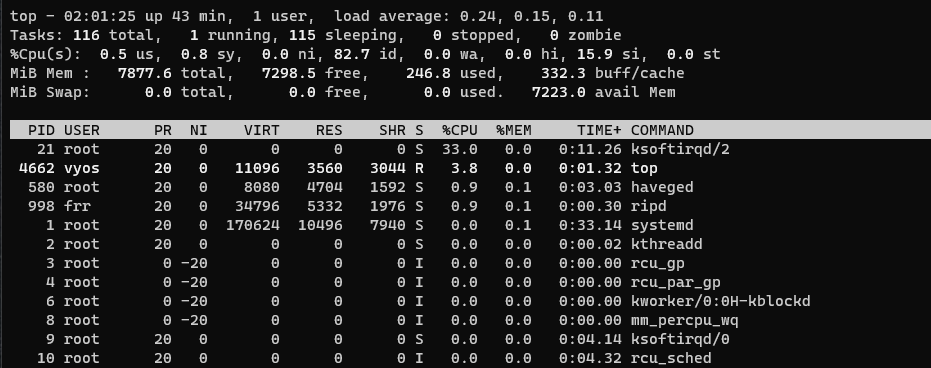

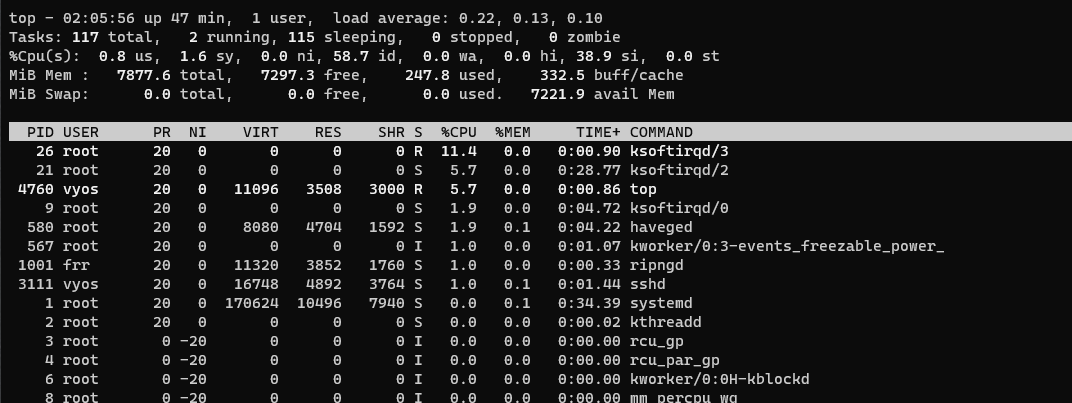

I’m running into a performance issue when routing data between 2 subnets.

I can reproduce it with iPerf3, and it’s strange because it only happens in one direction.

The issue occurs when routing TCP data between 2 subnets/VLANs.

My Desktop is on subnet A and my NAS is on subnet B.

I have the GRO, GSO, SG and TSO offloads enabled, and performance is fine on everything except traffic going from Subnet A (VLAN 50) to Subnet B (VLAN 1).
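To compare both directions under the same conditions, the iPerf3 test can be scripted from one machine. This is only a minimal sketch: it assumes `iperf3 -s` is already running on the NAS, and the address `192.0.2.10` is a placeholder rather than my real NAS address. The `-R` flag makes the server send, so both directions are measured from the Desktop.

```python
import json
import subprocess


def iperf3_command(server, reverse=False, seconds=10):
    """Build an iperf3 client command with JSON output.

    With reverse=True the -R flag is added, so the server sends
    and this client receives.
    """
    cmd = ["iperf3", "-c", server, "-t", str(seconds), "-J"]
    if reverse:
        cmd.append("-R")
    return cmd


def received_mbits(report):
    """Return the receiver-side TCP throughput in Mbit/s from an iperf3 JSON report."""
    return report["end"]["sum_received"]["bits_per_second"] / 1e6


def run(server, reverse=False):
    """Run one iperf3 test and return the measured throughput in Mbit/s."""
    result = subprocess.run(
        iperf3_command(server, reverse),
        capture_output=True, text=True, check=True,
    )
    return received_mbits(json.loads(result.stdout))


if __name__ == "__main__":
    nas = "192.0.2.10"  # placeholder address, replace with the NAS IP
    print("Desktop -> NAS:", run(nas), "Mbit/s")
    print("NAS -> Desktop:", run(nas, reverse=True), "Mbit/s")
```

Running it from the Desktop and again from a host on VLAN 1 should show whether the slowdown follows the routed path or a particular machine.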

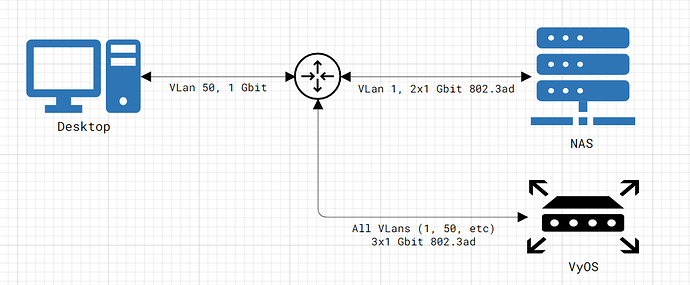

Here is my setup:

- Desktop connected to switch over 1Gbit LAN.

- VyOS box connected to switch over a 3x1Gbit 802.3ad LAGG, carrying all traffic over multiple VLANs.

- NAS connected to switch over a 2x1Gbit 802.3ad LAGG.

- Switch is a Netgear GS724Tv4

- The 2 subnets are on separate VLANs: the NAS is on VLAN 1 and the Desktop on VLAN 50.

- There are no firewall rules between the subnets.

Here is a visual representation:

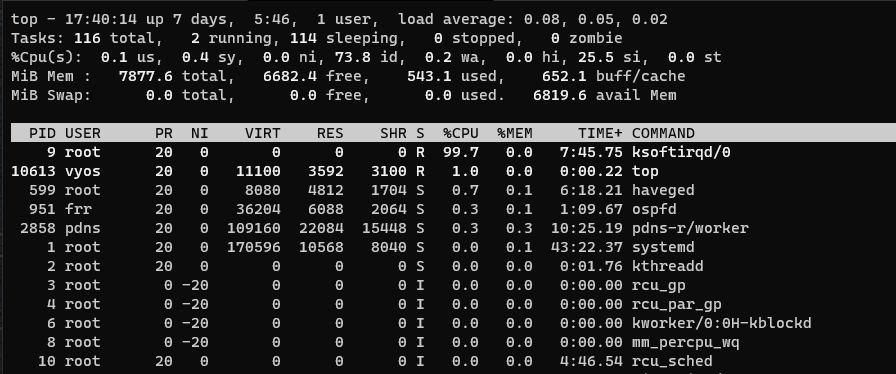

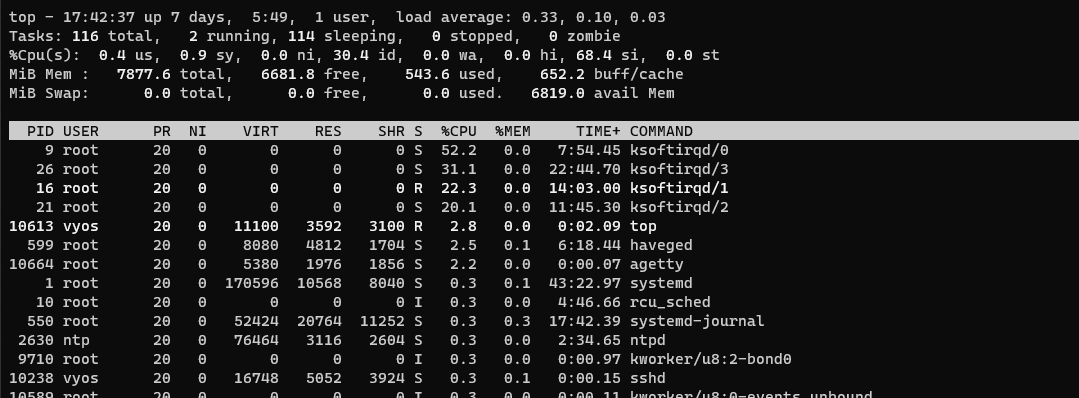

My thoughts and tests on points above:

- If the problem were with the LAGG between VyOS and the switch, I would expect both traffic directions to be affected.

- I’m not sure whether this could have an effect. It would be strange if it did, but it is still a LAGG into a LAGG, and I don’t know enough to judge what issues that might cause. I’ve tested with a laptop on VLAN 1 and it can communicate with the NAS at full link speed (this bypasses VyOS, as it’s the same VLAN and subnet).

Additionally, I’ve checked that VyOS, the Router and my Desktop are all set to an MTU of 1500, with the switch configured to allow a maximum frame size of 1518.
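As a sanity check on those numbers, here is the frame-size arithmetic as a small sketch. The header sizes are the standard Ethernet and 802.1Q values. Whether the switch’s maximum frame size setting already allows for the VLAN tag is something I have not verified, so treat that part as an open question rather than a finding.

```python
ETH_HEADER = 14  # destination MAC (6) + source MAC (6) + EtherType (2)
FCS = 4          # frame check sequence (CRC) trailer
DOT1Q_TAG = 4    # 802.1Q VLAN tag inserted into tagged frames


def frame_size(mtu, tagged):
    """Return the on-wire Ethernet frame size in bytes for a given MTU.

    Preamble and inter-frame gap are not counted.
    """
    return mtu + ETH_HEADER + FCS + (DOT1Q_TAG if tagged else 0)


print(frame_size(1500, tagged=False))  # 1518
print(frame_size(1500, tagged=True))   # 1522
```

So an untagged full-size frame is exactly 1518 bytes, while the same frame carried tagged on the trunk to VyOS is 1522 bytes.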

Any ideas what could affect routing in only one direction, or what troubleshooting I can do?